I was recently examining Google+ and how it transparently redirects through plus.google.com as it sends readers to your website. One of the oddities I noticed was this:

https://plus.google.com/url?sa=z&n=1310267970417&url=http%3A%2F%2Fwww.christopherspenn.com%2F2011%2F07%2Fhow-to-measure-google-plus-with-analytics%2F&usg=Fl5VGX6zftZiPhe6N1gENpM0EDQ.

Take a look at how the URL starts. The second query string variable looks awfully familiar. If you’re not familiar with query string variables, they’re the key/value pairs in the URL that have an equal sign. For example, let’s break up that G+ URL into key/value pairs:

- https://plus.google.com/url?

- sa=z

- n=1310267970417

- url=http%3A%2F%2Fwww.christopherspenn.com%2F2011%2F07%2Fhow-to-measure-google-plus-with-analytics%2F

- usg=Fl5VGX6zftZiPhe6N1gENpM0EDQ.
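If you'd rather not split the URL by hand, here's a minimal Python sketch that does the same breakdown with the standard library's `urllib.parse`. The helper name `query_pairs` is my own invention for illustration, and it assumes each key appears only once in the URL, as it does in this example.

```python
from urllib.parse import urlsplit, parse_qs

# The Google+ redirect URL from the example above, split across lines for readability.
G_PLUS_URL = (
    "https://plus.google.com/url?sa=z&n=1310267970417"
    "&url=http%3A%2F%2Fwww.christopherspenn.com%2F2011%2F07%2F"
    "how-to-measure-google-plus-with-analytics%2F"
    "&usg=Fl5VGX6zftZiPhe6N1gENpM0EDQ."
)


def query_pairs(url):
    """Return the query string of `url` as a dict of key -> first value.

    Hypothetical helper: assumes each key appears once in the URL.
    parse_qs also undoes the percent-encoding (%3A becomes ":", and so on).
    """
    parsed = parse_qs(urlsplit(url).query)
    return {key: values[0] for key, values in parsed.items()}


pairs = query_pairs(G_PLUS_URL)
for key, value in pairs.items():
    print(f"{key} = {value}")
```

Note that the `url` value comes back decoded, so it reads as a normal web address rather than a string full of `%2F` sequences.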

URL is pretty obvious: it’s the URL of the post I shared about Google+ Analytics.

USG appears to be a hash of some kind, but none of the usual hash lookup tools could make sense of it, so it’s probably just a unique identifier, perhaps for the Google+ post the URL was linked from.

SA is their standard URL discriminator; Z appears to be the type assigned to Google+.

That leaves us with the mystery of N. In the example above, N is a 13-digit number, 1310267970417. At first glance, it doesn’t appear to be anything, but if you look closely, it resembles a UNIX timestamp. Feed it into a UNIX timestamp calculator, however, and it returns a senseless result:

Sat, 27 Sep 43490 13:06:57 GMT

However, I poked around a bit more and did a regular date-time to UNIX conversion. The date and time I’m writing this post converts to 1311151020, which is only 10 digits long. So what if we trimmed the mysterious G+ number down from the right to match the same number of digits as a current UNIX timestamp? 1310267970 turns into:

Sun, 10 Jul 2011 03:19:30 GMT
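Here's a small Python sketch of that trimming step. It rests on one assumption that Google hasn't confirmed: that the last three digits of N are milliseconds, so dividing by 1000 gives an ordinary UNIX timestamp in seconds. The function name `describe_n` is made up for this example.

```python
from datetime import datetime, timezone


def describe_n(n):
    """Interpret a 13-digit `n` as milliseconds since the UNIX epoch.

    Assumption (not confirmed by Google): the trailing three digits
    are milliseconds. Returns (formatted UTC time, leftover milliseconds).
    """
    seconds, millis = divmod(int(n), 1000)
    when = datetime.fromtimestamp(seconds, tz=timezone.utc)
    return when.strftime("%a, %d %b %Y %H:%M:%S GMT"), millis


stamp, millis = describe_n("1310267970417")
print(stamp)   # Sun, 10 Jul 2011 03:19:30 GMT
print(millis)  # 417
```

Dropping the last three digits by hand and dividing by 1000 give the same answer; the division just keeps the leftover 417 around in case it turns out to be useful.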

Now isn’t that interesting? That’s closer to the time that I posted the article. Here’s where it gets funky. I went to that post and clicked through just now, as I was writing this. The N variable now reads: 1311165558. That’s just seconds ago.

Wed, 20 Jul 2011 12:39:18 GMT

Google+ is assigning a UNIX timestamp with an extra three digits – I’m guessing milliseconds, a sort of microtime – to every outbound click from G+ at the time of the click. Let me state that again: they’re uniquely timestamping every CLICK from G+ in the URL in real time. Not just when a post was shared, not just when a post was reshared, but Every. Single. Click.

Here’s where we get into tinfoil hat territory. There’s no logical reason to be timestamping clicks for things like spam control or malware control. You can, and they do in other places, just shut down the destination URL or redirect it to a warning page.

So why would G+ be timestamping every outbound click? This is pure speculation, but the only reason I can think of is that you’d want to track velocity on a link’s popularity. You’d want to track not only how often was it shared or reshared, but how engaged were people with the link, and over what period of time. When I post a link on Google+, it seems that G+ is measuring when clicks occur relative to that content – how popular it is over any given period of time.

We’ve known for a while, and read in many places, that Google is using social signals to influence search. What we have here may be the next iteration of that. Twitter’s data feed with Google came to an end, but Google has beefed up its social base with G+, and if it’s timestamping every single click, that data can be used to assess the validity and virality of content in a very tight, compact fashion that any data analysis tool can process. Further, by putting the timestamp data right in the URL, Google may be making it easy for its other properties, like Google Analytics, to process G+ data with a minimum of overhead.

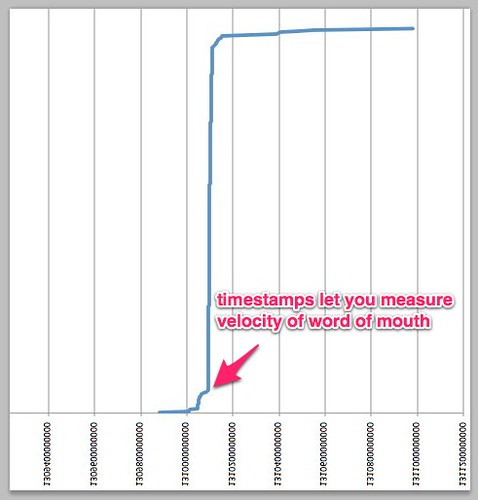

How easy are they making it? Using my existing Google+ data, this is my Google+ analytics blog post mapped in Excel using the timestamps from G+:

Notice that with this explicit timestamping, I can measure exactly when the clicks to the article really started to take off, and then when they plateaued again. Google is paying attention to this data, so it’s probably a good idea for you to pay attention to it as well.
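If Excel isn't your thing, the same kind of velocity view can be roughed out in a few lines of Python. This is only a sketch: it assumes N is in milliseconds, the function name `clicks_per_hour` is hypothetical, and apart from the first value (the one from the URL earlier in this post) the sample N values are invented purely to show the shape of the output. They are not real click data.

```python
from collections import Counter
from datetime import datetime, timezone


def clicks_per_hour(n_values):
    """Count clicks per UTC hour from a list of G+ `n` values.

    Assumes each `n` is milliseconds since the UNIX epoch.
    """
    counts = Counter()
    for n in n_values:
        when = datetime.fromtimestamp(int(n) // 1000, tz=timezone.utc)
        counts[when.strftime("%Y-%m-%d %H:00")] += 1
    # Sort by hour so the result reads as a timeline.
    return dict(sorted(counts.items()))


# Illustrative input: only the first value appears in the post,
# the rest are made up for this example.
sample_n_values = [
    "1310267970417",
    "1310268500000",
    "1310271600123",
    "1310275200999",
]

hourly = clicks_per_hour(sample_n_values)
for hour, clicks in hourly.items():
    print(hour, clicks)
```

Hourly buckets are an arbitrary choice here; a busier link might call for minute-level buckets, and a quieter one for daily totals.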

If you don’t know how to collect this data… well, stay tuned. Tomorrow I’ll show you.
