Search engine optimization, or SEO, has changed significantly in the past few years. Thanks to the power of machine learning and artificial intelligence, the only way to build a sustainable, long-term SEO strategy is to create content people want to share. To combat these new trends, we need to employ our own machine learning technology to find what works and perform modern SEO at scale.

In this post, we’ll look at step 5: extraction.

Extraction and Topic Modeling

Recall that in earlier posts, when we were classifying content by search metrics, we looked at rank and authority as two meta-factors. Rank reflects our ability to generate interest from the outside in (off-site); authority reflects our ability to create powerful content from the inside out (on-site).

To ensure we have something worth ranking for, we need authoritative content. The challenge is, what constitutes authoritative content?

The search algorithms which power modern search engines like Google and Bing have become so complex that no one human being understands how the various machine learning pieces truly work. This complexity helps Google achieve better, more relevant results, but at the expense of understanding what’s inside the magic box.

For search marketers, this also means that we cannot rely on simplistic SEO strategy. We are unlikely to be able to outthink the machines.

Instead, we must deploy our own machines to reverse-engineer search results, grouping the top search results together to understand what language they have in common.

Extraction Process

Using machine learning tools such as topic modeling and text mining, we extract search results’ textual content to determine the language in common on the top results. We don’t need to know the algorithm behind what makes those results relevant and authoritative; we simply need to know what language we cannot afford to omit if we want to create authority for our chosen topics.

Let’s use our example from the previous post on robotic process automation and extract the text from our top search results. For a search engine like Bing, use the API. For a search engine like Google, adhere to the terms of service (automated scraping is prohibited) and perform the extraction manually.

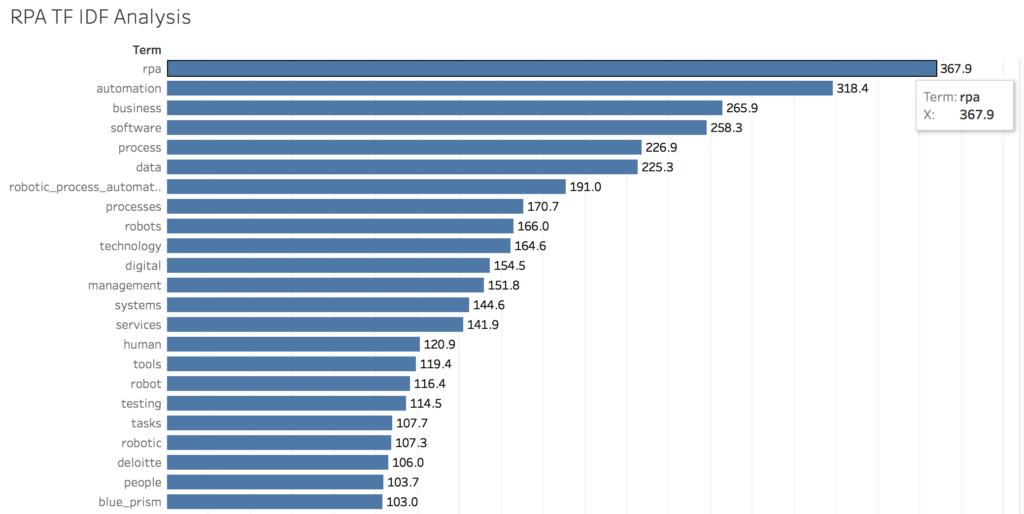

Using R and the Quanteda text mining package, we extract out the top terms and phrases used on the top-ranking pages, refine and engineer a dictionary to catch multi-word phrases, then score them using TF-IDF, the term frequency/inverse document frequency algorithm:

We now have an excellent idea of the relevant terms common to all these pages. Any page we create that we seek authority for should use a majority of these terms to ensure that our content is as authoritative as possible, while still preserving natural language.

Next: Intent Timing

At this point, we should have relevant, high-authority content. We’ve spent a lot of time researching what should be on our site, from themes and topics to keywords and text. We next begin the journey to outside, starting with understanding audience intent timing. Stay tuned!

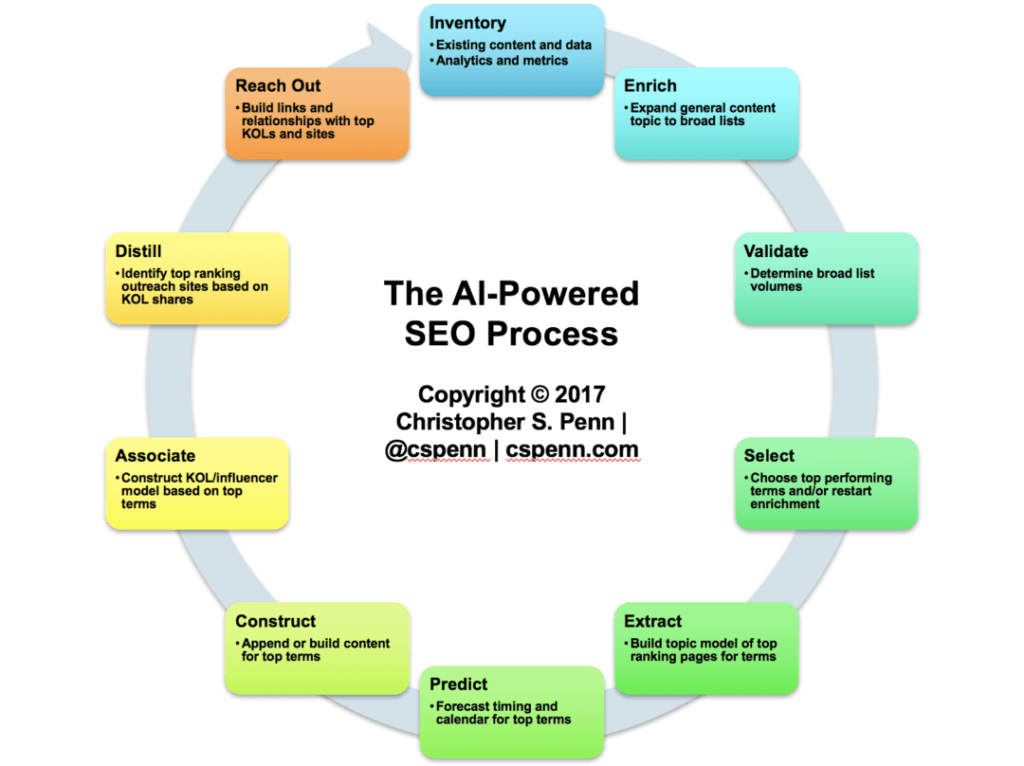

The AI-Powered SEO Process

- Step 1: Inventory – understand what we have and how it performs.

- Step 2: Enrich – building off what we have, see what else is out there

- Step 3: Validate and Select – check that we’re working towards high-volume topics, make choices from the topic buffet of what to pursue

- Step 4: Extract – determine what else makes the top performing pages work so well from a content and topic perspective

- Step 5: Predict – understand the seasonality of our chosen topic

- Step 6: Construct – improve or create new content for our chosen topic

- Step 7: Associate – explore Key Opinion Leaders (KOL) who are influential in our topic’s space

- Step 8: Distill & Reach Out – understand what sites our KOLs share and their search authority, pitch our new content to our KOLs and their media sites of choice

- Step 9: Wrap Up/Conclusion – review where we’ve been

You might also enjoy:

- Almost Timely News: Principles-Based Prompt Engineering (2024-02-25)

- Almost Timely News, February 4, 2024: What AI Has Made Scarce

- You Ask, I Answer: AI Music Collaborations and Copyright?

- Mind Readings: You Need Passwords for Life in the Age of Generative AI Fraud

- Almost Timely News, February 11, 2024: How To Evaluate a Generative AI System

Want to read more like this from Christopher Penn? Get updates here:

Take my Generative AI for Marketers course! |

Leave a Reply